Urban Analytics Lab

About us

We are developing quantitative methods and tools that leverage emerging geospatial data and AI to sense the form, function, and human experience of cities.

Watch the video below or read more here.

Established and directed by Filip Biljecki, we are proudly based at the Department of Architecture at the College of Design and Engineering of the National University of Singapore, a leading global university centered in the heart of Southeast Asia. We are also affiliated with the Department of Real Estate at the NUS Business School.

News

Updates from our group

Recent publications

Full list of publications is here.

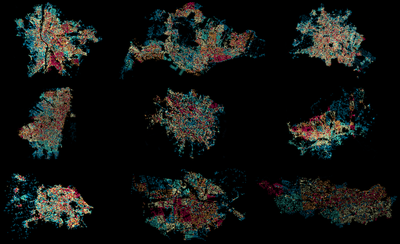

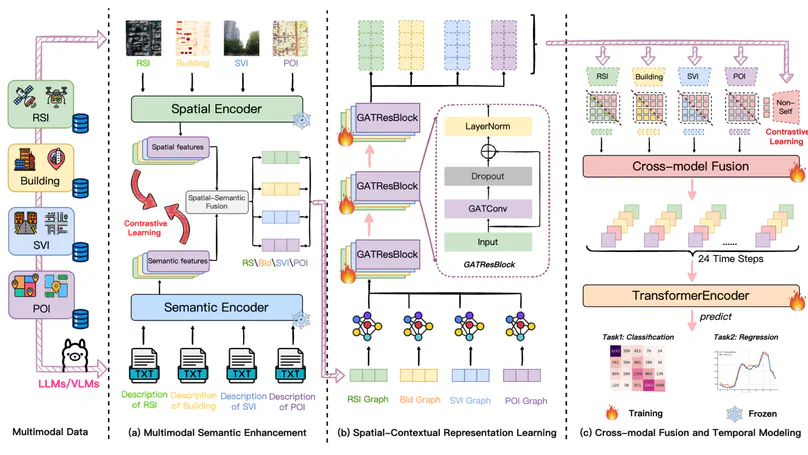

Accurately predicting fine-grained urban mobility is essential for optimizing transportation, accessibility, and urban management. However, existing approaches often depend on dynamic data such as trajectories or signaling records, which are sparsely available across cities, thereby limiting their applicability and generalizability to new urban contexts. To address these limitations, this study proposes a Large Model Enhanced Multimodal Representations (LMEMR) framework to learn hourly grid-level mobility dynamics solely from widely accessible static geospatial data, including remote sensing imagery, building data, street view imagery, and points of interest. Large vision–language models are employed to generate natural-language descriptions of each modality, enriching the data with human-understandable semantics. A dual-level contrastive learning strategy aligns raw and textual features both within and across modalities, mitigating semantic gaps and enhancing multimodal consistency. Spatial dependencies are modeled through a graph attention network, and temporal dynamics are captured via a transformer encoder to produce 24-hour mobility sequences. Results from Shenzhen demonstrate that LMEMR outperforms the baseline CLIP model, achieving an $R^{2}$ of 0.856 and an 18.07% reduction in MAE. Ablation experiments confirm the effectiveness of semantic enhancement, spatial graph reasoning, and cross-modal fusion. Overall, this research reveals the potential of static multimodal data for dynamic mobility inference, offering a scalable, interpretable, and privacy-friendly solution for smart city planning and management.
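
For readers unfamiliar with the two metrics quoted above, the following is a minimal sketch of how $R^{2}$ and a relative MAE reduction are commonly computed. It is not the authors' evaluation code: the function names and the toy numbers are assumptions chosen for illustration, and the abstract does not state how the reported figures were aggregated.

```python
import numpy as np


def mae(y_true, y_pred):
    """Mean absolute error between observed and predicted values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(y_true - y_pred)))


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


def relative_reduction(baseline_error, model_error):
    """Percentage by which model_error is lower than baseline_error."""
    return 100.0 * (baseline_error - model_error) / baseline_error


# Toy hourly mobility values (hypothetical, not taken from the paper).
observed = [10, 20, 30, 40]
baseline_pred = [14, 16, 34, 36]  # stand-in for a baseline model
model_pred = [12, 18, 32, 38]     # stand-in for an improved model

baseline_mae = mae(observed, baseline_pred)
model_mae = mae(observed, model_pred)
model_r2 = r2_score(observed, model_pred)
mae_drop = relative_reduction(baseline_mae, model_mae)
```

Under this reading, an "18.07% reduction in MAE" means the model's MAE is 18.07% lower than the baseline's, relative to the baseline value.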

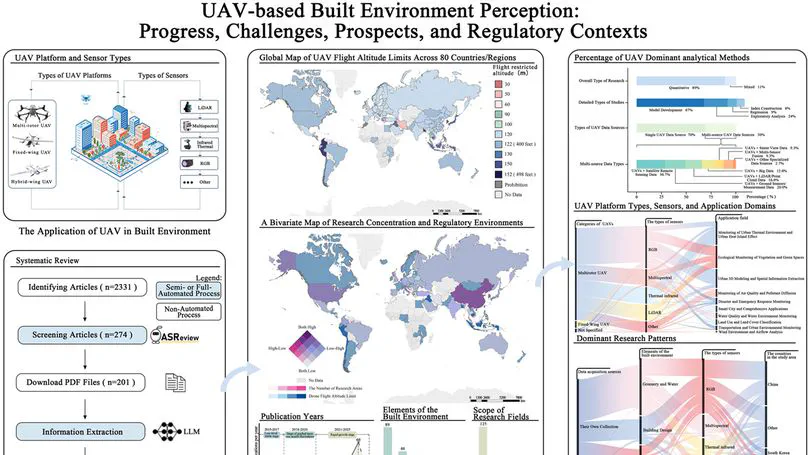

Uncrewed Aerial Vehicles (UAVs) have proven to be a transformative technology for the fine-scale perception of the built environment. The recent proliferation of new platforms, sensors, and algorithms creates unprecedented opportunities to understand a complex environment. However, effectively harnessing these opportunities requires a systematic assessment of the emerging methodological practices to address challenges concerning the comparability, reproducibility, and generalizability of the knowledge being produced. Therefore, this study aims to systematically map the dominant methodological workflows in UAV-based built environment perception and critically assess their implications for scientific knowledge production. We conducted a systematic review of 201 peer-reviewed articles in the last decade (2015–2025), complemented by the construction of a novel global dataset of UAV flight policies across 80 countries, to deconstruct the dominant research workflows and to synthesize the progress and challenges across key application domains. Our analysis, leveraging a novel method that integrates PRISMA, machine learning, and Large Language Models, reveals a pronounced convergence in research practices, which stands in contrast to the apparent diversity of available technologies. We determine that the state of the art is characterized by: (i) a geographical concentration of studies in the Global North, correlated with permissive regulatory environments; (ii) a technological path dependency on a ‘standardized toolkit’ of multirotor UAVs and RGB sensors; and (iii) a methodological reliance on self-collected data (91%) that often remains non-public, fostering a research ecosystem of quantitative, computer vision-based analysis. 
By diagnosing these dominant patterns and their associated challenges, we propose a forward-looking agenda centered on fostering open science, diversifying technologies, and expanding methodological horizons to build a more integrated, robust, and equitable research future.

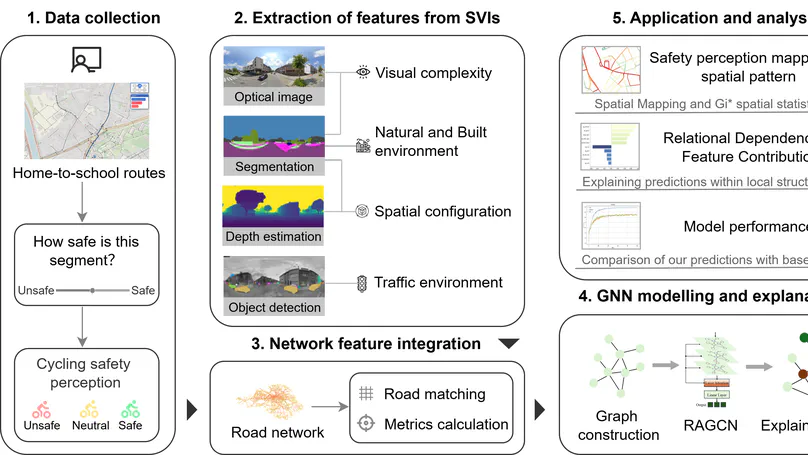

Perceived cycling safety remains a critical determinant of bicycle use among adolescents. Previous studies have highlighted the role of street environments in shaping safety perceptions, but most rely on spatial attributes (e.g., road infrastructure, land-use indices) and rarely incorporate the cyclists’ visual perspective. This study proposes a multidimensional framework that integrates visual and spatial representations of urban streets to model perceived cycling safety. By embedding fine-grained visual indicators derived from street view imagery into the road network, this novel framework captures 31 features across six environmental dimensions. Existing studies typically model perceived cycling safety using only a road’s own attributes, neglecting the influence of nearby roads. To address this limitation, we develop an improved Graph Convolutional Network that incorporates geographic context. It integrates layer-wise attention and an adaptive loss function to handle class imbalance and capture spatial dependencies. Explainable artificial intelligence (XAI) techniques are applied to interpret feature importance within the spatial context, moving beyond linear assumptions of traditional models. The framework is applied to a perception survey focusing on adolescents in Ghent, Belgium. The proposed model achieves an overall accuracy of 83.1%, outperforming all baselines and presenting a major advancement in this domain. XAI analysis reveals that both texture complexity and color monotony of the built environment tend to reduce perceived cycling safety, while tree coverage has a positive effect. Overall, the framework offers an interpretable and scalable approach for mapping street-level safety perception, providing actionable insights for cycling-oriented urban design and the development of sustainable transport planning.

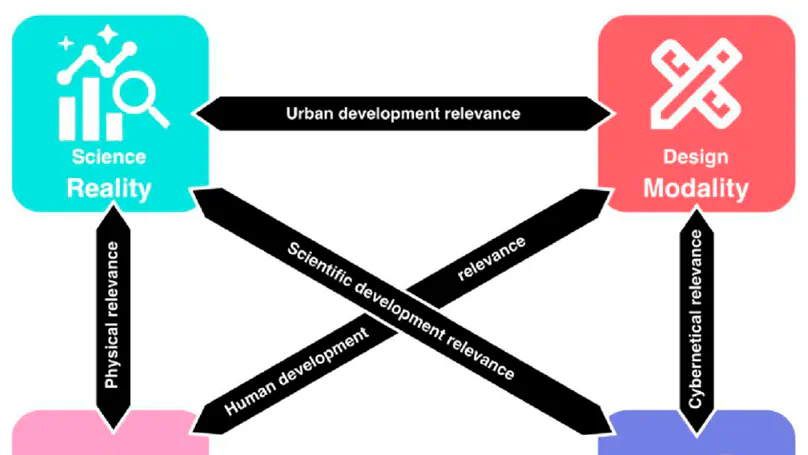

Architecture, engineering, and construction have increasingly integrated automated tools and digital approaches for urban analysis and design. Many of these approaches are tailored towards either urban Design or urban Science, despite both being central to our understanding of cities. The separation between these two core aspects of urban development introduces multidisciplinary challenges when addressing the consequences of urban phenomena. We propose the paradigm of Semantic Urban Elements (SUEs) to combine Science-based and Design-based knowledge about potential solutions to complex urban issues, integrating advances in scientific and design thinking about such solutions using formal, open knowledge representation frameworks. We first present current problems by briefly discussing the relevant state of the art in urban Science and urban Design. Second, we derive the key characteristics that a combined urban Design+Science approach would require. We then posit a definition of SUEs and provide an illustrative example, after which we contextualize our proposition and evaluate existing work through the lens of our proposed paradigm. The novel SUEs method is an enabling infrastructure that supports iterative, evidence-informed design exploration and transparent evaluation. In this way, the SUEs paradigm responds to the digital revolution in urban quantification by integrating Design and Science, and provides the necessary framing lens to tackle different challenges in cities in a way that benefits cities, humans, and their future, considering their mutual relationships.

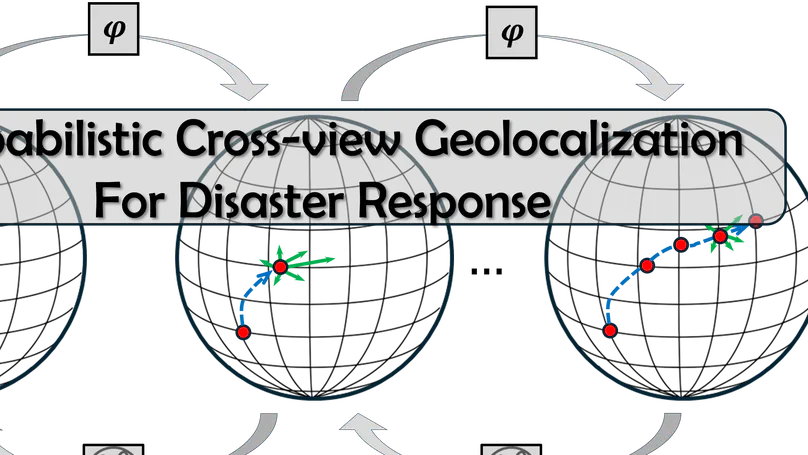

As Earth’s climate changes, it is impacting disasters and extreme weather events across the planet. Record-breaking heatwaves, drenching rainfalls, extreme wildfires, and widespread flooding during hurricanes are all becoming more frequent and more intense. Rapid and efficient response to disaster events is essential for climate resilience and sustainability. A key challenge in disaster response is to identify disaster locations accurately and in a timely manner to support decision-making and resource allocation. In this paper, we propose a Probabilistic Cross-view Geolocalization approach, called ProbGLC, exploring new pathways towards generative location awareness for rapid disaster response. Herein, we combine probabilistic and deterministic geolocalization models into a unified framework to simultaneously enhance model explainability (via uncertainty quantification) and achieve state-of-the-art geolocalization performance. Designed for rapid disaster response, ProbGLC is able to address cross-view geolocalization across multiple disaster events as well as to offer the unique features of a probabilistic distribution and a localizability score. To evaluate ProbGLC, we conduct extensive experiments on two cross-view disaster datasets (i.e., MultiIAN and SAGAINDisaster), consisting of diverse cross-view imagery pairs of multiple disaster types (e.g., hurricanes, wildfires, floods, and tornadoes). Preliminary results confirm the superior geolocalization accuracy (i.e., 0.86 in Acc@1km and 0.97 in Acc@25km) and model explainability (i.e., via probabilistic distributions and localizability scores) of the proposed ProbGLC approach, highlighting the great potential of leveraging a generative cross-view approach to facilitate location awareness for better and faster disaster response. The data and code are publicly available at https://github.com/bobleegogogo/ProbGLC.
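
As an illustration of the Acc@1km and Acc@25km figures, the sketch below computes a threshold accuracy under one common reading: the share of predictions whose great-circle distance to the true location is at most the threshold. This is an assumption, since the abstract does not define the metric, and the code is not taken from the ProbGLC repository; `haversine_km`, `acc_at_km`, and the sample coordinates are hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, an approximation


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def acc_at_km(predictions, truths, threshold_km):
    """Fraction of predictions lying within threshold_km of their true location."""
    hits = sum(
        haversine_km(p_lat, p_lon, t_lat, t_lon) <= threshold_km
        for (p_lat, p_lon), (t_lat, t_lon) in zip(predictions, truths)
    )
    return hits / len(truths)


# Hypothetical predictions around a true location on the equator.
truths = [(0.0, 0.0)] * 4
predictions = [
    (0.0, 0.005),  # roughly 0.56 km away
    (0.0, 0.1),    # roughly 11 km away
    (0.0, 0.2),    # roughly 22 km away
    (0.0, 1.0),    # roughly 111 km away
]

acc_1km = acc_at_km(predictions, truths, 1.0)
acc_25km = acc_at_km(predictions, truths, 25.0)
```

With these toy points, one of four predictions falls within 1 km and three of four within 25 km, which shows why accuracy rises as the threshold loosens.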

Contact

- [email protected]

- SDE4, NUS College of Design and Engineering, 8 Architecture Dr, Singapore, Singapore 117564

- X